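import torch
from torch import nn
import numpy as np
import matplotlib.pyplot as plt
import os

# Hyperparameters
TIME_STEP = 10   # number of time steps fed to the RNN per training segment
INPUT_SIZE = 1   # one feature (the sine value) per time step
LR = 0.02        # learning rate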

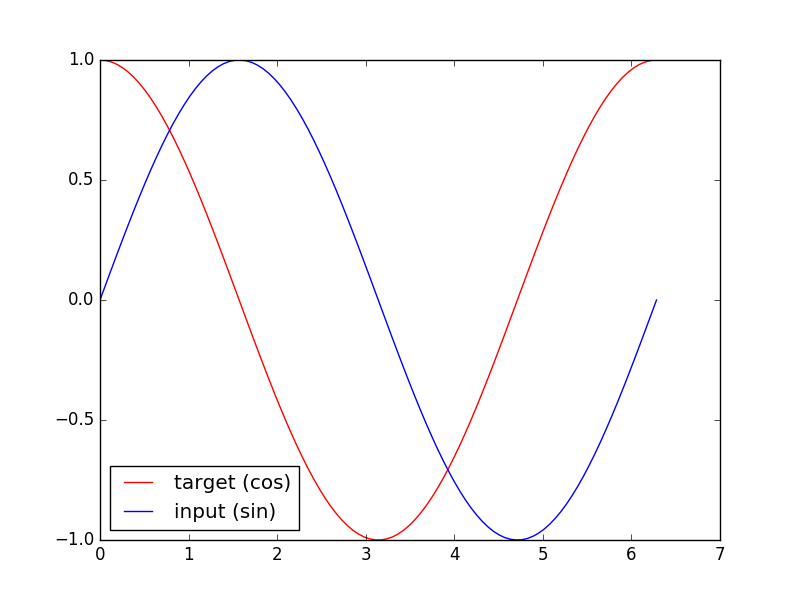

# Preview the data: the network sees sin(t) and should learn to output cos(t)
steps = np.linspace(0, np.pi*2, 100, dtype=np.float32)
x_np = np.sin(steps)
y_np = np.cos(steps)
plt.plot(steps, y_np, 'r-', label='target (cos)')
plt.plot(steps, x_np, 'b-', label='input (sin)')
plt.legend(loc='best')
plt.show()

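class RNN(nn.Module):
    def __init__(self):
        super(RNN, self).__init__()

        self.rnn = nn.RNN(
            input_size=INPUT_SIZE,
            hidden_size=32,      # number of hidden units
            num_layers=1,
            batch_first=True,    # tensors are (batch, time_step, input_size)
        )
        self.out = nn.Linear(32, 1)

    def forward(self, x, h_state):
        # x:       (batch, time_step, input_size)
        # h_state: (num_layers, batch, hidden_size)
        # r_out:   (batch, time_step, hidden_size)
        r_out, h_state = self.rnn(x, h_state)

        # Project the hidden output at every time step to a single value
        outs = []
        for time_step in range(r_out.size(1)):
            outs.append(self.out(r_out[:, time_step, :]))
        return torch.stack(outs, dim=1), h_state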

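rnn = RNN()
print(rnn)

optimizer = torch.optim.Adam(rnn.parameters(), lr=LR)
loss_func = nn.MSELoss()

h_state = None   # initial hidden state; None makes nn.RNN start from zeros

plt.figure(1, figsize=(12, 5))
plt.ion()        # interactive mode, so the plot updates during training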

for step in range(100):
    # Each step trains on the next segment of length pi
    start, end = step * np.pi, (step+1)*np.pi
    steps = np.linspace(start, end, TIME_STEP, dtype=np.float32, endpoint=False)
    x_np = np.sin(steps)
    y_np = np.cos(steps)

    # Add batch and feature axes: shape (1, TIME_STEP, 1)
    x = torch.from_numpy(x_np[np.newaxis, :, np.newaxis])
    y = torch.from_numpy(y_np[np.newaxis, :, np.newaxis])

    prediction, h_state = rnn(x, h_state)
    # Detach the hidden state so gradients do not flow back into earlier segments
    h_state = h_state.detach()

    loss = loss_func(prediction, y)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Plot the target (red) against the prediction (blue)
    plt.plot(steps, y_np.flatten(), 'r-')
    plt.plot(steps, prediction.detach().numpy().flatten(), 'b-')
    plt.draw(); plt.pause(0.05)

plt.ioff()

plt.show()

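The `forward` method in the listing applies the output layer once per time step and stacks the results. As a minimal sketch, the snippet below checks that a single call to `nn.Linear` on the whole `(batch, time_step, hidden_size)` tensor should give the same result, since `nn.Linear` acts only on the last dimension. The names `linear`, `looped`, and `vectorized` and the random input are illustrative assumptions, not part of the original code.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-ins mirroring the listing: hidden_size=32, one output value.
linear = nn.Linear(32, 1)
r_out = torch.randn(1, 10, 32)  # (batch, time_step, hidden_size)

# Per-time-step projection, written the same way as the listing's forward().
looped = torch.stack(
    [linear(r_out[:, t, :]) for t in range(r_out.size(1))], dim=1
)

# Single call: nn.Linear transforms the last dimension and keeps the others.
vectorized = linear(r_out)

same = torch.allclose(looped, vectorized, atol=1e-5)
print(looped.shape, vectorized.shape, same)
```

The explicit loop makes the per-step structure visible, which suits a tutorial. The single call is shorter and avoids Python-level iteration, so it may be preferable once the shapes are understood.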